Linked Data Management Service

For viewing the documentation on the methods exposed by the Linked Data Management Service please visit this URL.

The documentation that follows (which can also be found here) explains how to publish relational or XML-based data as Linked Data within the InGeoCloudS platform.

IMPORTING MECHANISMS OVERVIEW

The Linked Data Management Service (LDMS) offers three ways in which provider data can be imported into the underlying Triple Store. The first is direct, while the other two are indirect. In the first way, the data provider has full control of the data import and update process: he/she first creates an LD specification from his/her original data and then imports this specification by calling the ldimport method of the LDMS service. Subsequently, any modifications performed on the original data should be reflected in the imported LD by issuing SPARUL statements through the ldupdate method of the LDMS service. This direct import way is suitable in the following cases: (a) only static data exist and need to be imported into the underlying Triple Store, (b) the data provider wants to publish only particular parts of his/her data sets as LD, and (c) the data provider wishes to update the LD asynchronously with respect to modifications occurring in the original data.

The second import way assumes the usual case, in which the provider's data are in relational form. In this case, the provider first has to define an R2RML [1] mapping between his/her data model and the GSOM model (see Deliverable D2.2, and especially its appendix, for a complete analysis of GSOM) and then register the mapping by calling the addR2RMLMappings method of the LDMS service. This registration creates a uni-directional synchronization mechanism, which ensures not only that LD are created from the original relational data but also that updates to the relational data are propagated to the respective LD. This import way is suitable for the following cases: (a) the data provider does not wish to control the actual LD import and update process, as he/she may lack the appropriate resources or expertise, (b) the generated LD should always be up-to-date, as this might be critical for the provider's applications, and (c) the data provider has many related data sets but has not yet integrated them. The last case shows that this import way enables the integration of the provider's data sets not only with each other but also with all other related data sets imported by the other data providers of the InGeoCloudS platform. It also guarantees the appropriate visibility of the provider's data sets, since cross-provider queries can be issued via the respective LDMS service methods. Please note that, as in the first import way, this import way can also cater for the case where the provider publishes only part of his/her original data, as long as this is specified in the registered R2RML mapping.

The third import way assumes that the provider's data are in XML form and relies on an XSLT [2] mapping specification between the provider's data model and the GSOM model in order to transform the data and store them in LD form in the underlying Triple Store. In particular, after the data provider generates the XSLT mapping specification, he/she simply invokes the addXSLMappings method of the LDMS service, passing this specification and his/her zipped data as input, in order to import the data. In contrast to the previous import way, there is no uni-directional synchronization mechanism between the provider's data and the respective LD generated. Instead, the data provider is responsible for this synchronization: he/she should re-invoke the addXSLMappings method when new data are created, or create the respective SPARUL statements and invoke the ldupdate method when the original XML-based data have been modified. Thus, this import way is suitable when provider data are XML-based and are either static or only extended with new insertions. The data can also be dynamic, with modifications and deletions, but this means that the data provider must be able to update the LD accordingly when such changes occur, and thus must have the appropriate resources and experience.

In the following sub-sections, we concentrate on the procedure and the user steps involved in the two indirect import ways.

[1] http://www.w3.org/TR/r2rml/

[2] http://www.w3.org/TR/xslt

DOCUMENTATION ON HOW-TO PUBLISH RELATIONAL DATA AS LINKED DATA THROUGH THE INGEOCLOUDS API

Data supplied by the data providers are usually described and stored following the relational paradigm. On the other hand, in order to publish those data as Linked (Open) Data, we need to describe them using RDF and models that follow RDF/S. Thus we need a process that allows any data provider to map and subsequently transform those data into the corresponding Linked Data. This process is described below as a series of steps that generate Linked Data from the relational data of any data provider. The process works similarly for data in other structured formats, such as XML or Excel.

- Firstly, Virtuoso needs to be connected to the relational data source, either by copying the relational data from that source or by linking to the source. The second option is usually preferable, since it avoids replication and makes it possible to support updates, as we discuss later. The first option exists for providers that do not want to provide live access to their data.

- Secondly, the concepts and relationships contained in the relational data have to be mapped to corresponding concepts and relationships of the Geo-Scientific Observation Model (GSOM). This model is analyzed in Deliverable D2.2, along with explanations of the mappings that have been performed from notions of the partners’ relational data to those of the model.

- Thirdly and after having created the mappings, we formalize them using the R2RML language and register them in the Triplestore (currently Virtuoso) using the method addR2RMLMappings from our API analyzed in D3.2. R2RML is a W3C recommended language for expressing customized mappings from relational databases to RDF datasets and Virtuoso is the only Triple Store, among the candidate ones, which supports this language. As a result, by having the relational data connected in Virtuoso and the corresponding mappings into our GSOM expressed using R2RML, Virtuoso can then automatically create the corresponding RDF data.

- The process followed to describe the partners’ relational data using R2RML is also suitable when these data are updated. In fact, when such R2RML mappings exist over relational data, Virtuoso creates particular triggers, namely RDB2RDF triggers, which are activated whenever the relational data change and which update the semantic data accordingly.

- Please note that when the relational schema is updated by a data provider, the respective new R2RML mappings should be added into Virtuoso using the method addR2RMLMappings. In this way, Virtuoso creates the new RDB2RDF triggers and updates the RDF data accordingly.
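The idea behind trigger-based, uni-directional synchronization can be illustrated with a small self-contained Python sketch. Note that this is only an analogy: it uses SQLite triggers and a plain triples table rather than Virtuoso's RDB2RDF triggers, and the table name, column names and URIs are hypothetical. It shows how changes to a relational table can be propagated automatically to a triple representation, while nothing flows in the opposite direction.

```python
import sqlite3

SCHEMA = """
CREATE TABLE sample (sampleid INTEGER PRIMARY KEY, sampledate TEXT);
CREATE TABLE triples (s TEXT, p TEXT, o TEXT);

-- New relational row: emit the corresponding triple.
CREATE TRIGGER sample_insert AFTER INSERT ON sample BEGIN
    INSERT INTO triples VALUES (
        'http://example.org/sample/' || NEW.sampleid,
        'http://example.org/sampledate',
        NEW.sampledate);
END;

-- Changed value: rewrite the object of the existing triple.
CREATE TRIGGER sample_update AFTER UPDATE OF sampledate ON sample BEGIN
    UPDATE triples SET o = NEW.sampledate
    WHERE s = 'http://example.org/sample/' || NEW.sampleid
      AND p = 'http://example.org/sampledate';
END;

-- Deleted row: remove all triples about that subject.
CREATE TRIGGER sample_delete AFTER DELETE ON sample BEGIN
    DELETE FROM triples WHERE s = 'http://example.org/sample/' || OLD.sampleid;
END;
"""


def open_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with the table, triple store and triggers."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def triples(conn: sqlite3.Connection) -> list:
    """Return the current content of the triples table."""
    return conn.execute("SELECT s, p, o FROM triples ORDER BY s").fetchall()


conn = open_demo_db()
conn.execute("INSERT INTO sample VALUES (1, '2012-05-01')")
conn.execute("UPDATE sample SET sampledate = '2012-06-15' WHERE sampleid = 1")
print(triples(conn))  # the triple now carries the updated date
```

The provider only ever touches the relational table; the triple representation follows automatically, which is the property the R2RML-based import way relies on.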

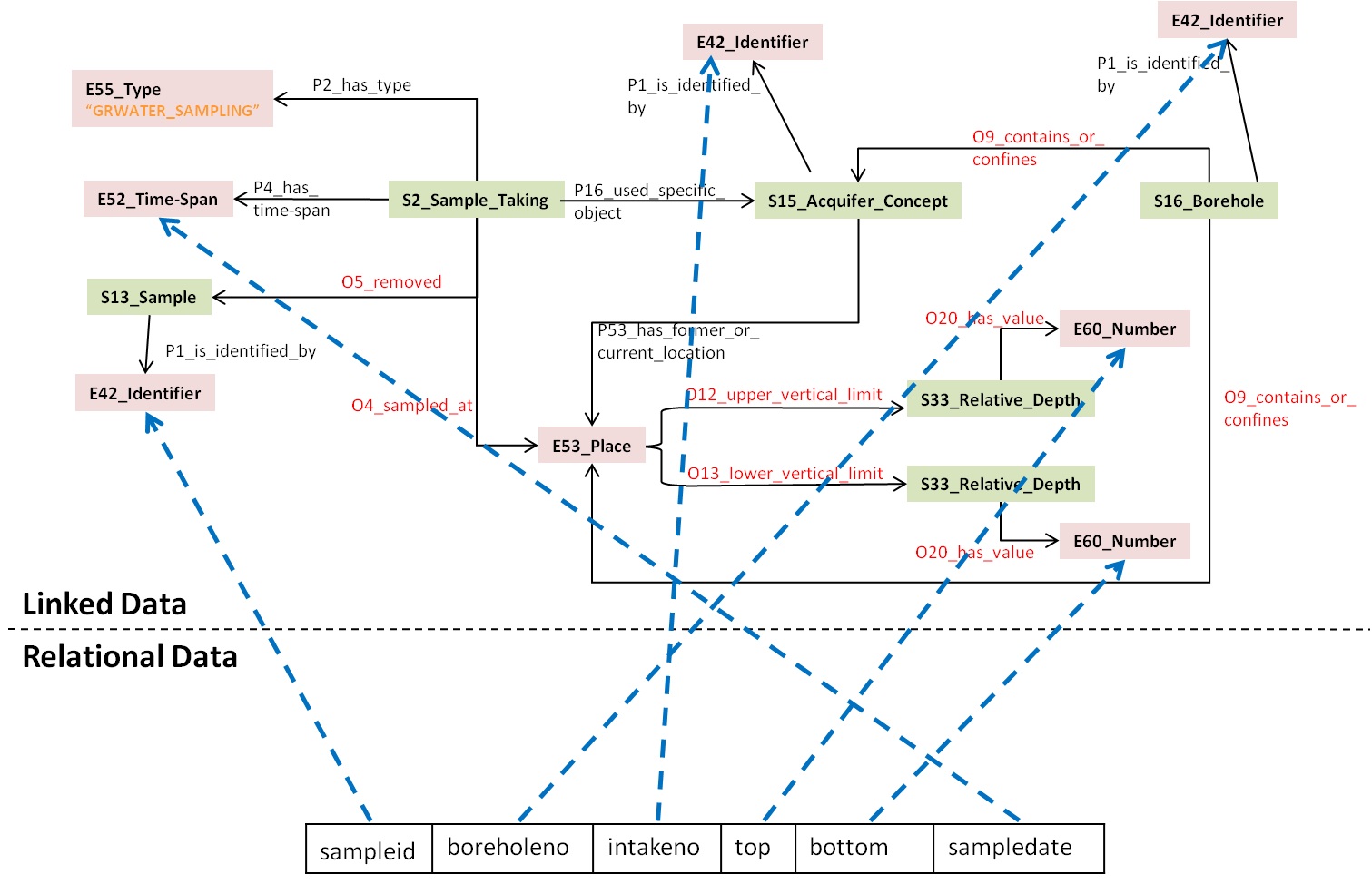

The above steps can be illustrated with the following scenario, which shows the steps we followed to generate the corresponding Linked Data from the Relational Data which came from the project partner GEUS and refer to the sample taking activity. As mentioned, the first step is to either insert or link Virtuoso to the Relational Data source of the data providers. In this scenario we have a single relational table containing ground water sample taking data as we can see in Figure 1.

Next, we map the relational data notions into notions of our Conceptual Model as we can see in Figure 1. For example the sampledate field can be mapped to the E52_Time-Span notion of the model, the boreholeno can be mapped to the E42_Identifier notion which is used to identify the borehole from which the sample was taken etc.

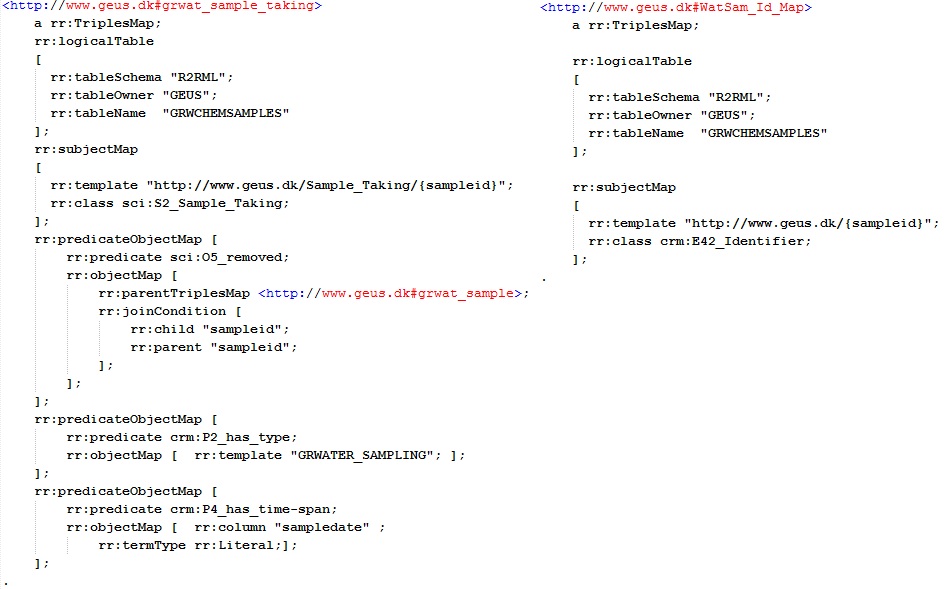

The final step is the formalization of the created mappings in the R2RML language. Figure 2 shows sample R2RML code that expresses the mappings for sampleid and sampledate.
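For readers without access to the figure, the following Python sketch generates a small R2RML document of the same general shape. It is not the mapping of Figure 2: the table name, the URI template, the `map:` and `ex:` namespaces, and the class and property names are placeholders that we assume for illustration and are not actual GSOM terms. Only the `rr:` terms (rr:TriplesMap, rr:logicalTable, rr:subjectMap, rr:predicateObjectMap and so on) come from the R2RML vocabulary.

```python
PREFIXES = """\
@prefix rr: <http://www.w3.org/ns/r2rml#> .
@prefix map: <http://example.org/mapping#> .
@prefix ex: <http://example.org/gsom-placeholder#> .
"""


def triples_map(name: str, table: str, key_column: str,
                subject_class: str, column_predicates: dict) -> str:
    """Build one rr:TriplesMap (in Turtle) for a single relational table.

    column_predicates maps a column name to the predicate that should
    carry the column's value.
    """
    # Subjects are minted from the key column, e.g. .../sample/{sampleid}
    template = f"http://example.org/{table}/{{{key_column}}}"
    parts = [
        f'rr:logicalTable [ rr:tableName "{table}" ]',
        f'rr:subjectMap [ rr:template "{template}" ; rr:class {subject_class} ]',
    ]
    for column, predicate in column_predicates.items():
        parts.append(
            "rr:predicateObjectMap [\n"
            f"        rr:predicate {predicate} ;\n"
            f'        rr:objectMap [ rr:column "{column}" ]\n'
            "    ]"
        )
    body = " ;\n    ".join(parts)
    return f"{PREFIXES}\nmap:{name} a rr:TriplesMap ;\n    {body} .\n"


# Placeholder names only; the real GSOM classes and properties differ.
mapping = triples_map(
    "SampleMap",
    "sample",
    "sampleid",
    "ex:Sample",
    {"sampleid": "ex:sampleid", "sampledate": "ex:sampledate"},
)
print(mapping)
```

A real mapping to GSOM would typically be richer, for example by creating separate resources for notions such as E52_Time-Span instead of attaching plain column values to the sample.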

Having created the R2RML mappings for the source relational schema, we call the addR2RMLMappings method of our API to register them into Virtuoso. At this point, the corresponding RDF data will be created and as we mentioned above, any changes on the Relational data will lead to the synchronization of the RDF data as well.

Figure 1. Mapping Relational to Linked Data

Figure 2. R2RML Mappings

DOCUMENTATION ON HOW-TO PUBLISH XML DATA AS LINKED DATA THROUGH THE INGEOCLOUDS API

While the relational data import follows an approach in which a particular mapping is generated and then registered so as to create the appropriate uni-directional synchronization mechanism, the XML-based data import follows a slightly different process: the XML-based data are transformed and stored in the underlying Triple Store in one shot through the respective addXSLMappings method of the LDMS service. The process should therefore also consider the cases where the provider's data are dynamically managed and modified. It is described below as a series of steps that generate Linked Data from the XML-based data of any data provider.

- First, the notions pertaining to the provider's data model should be mapped to the respective notions of the GSOM model similarly to the second step of the previous R2RML-based process.

- Second, this mapping should be formalized via XSLT. This implies that the data provider should not only know the GSOM model but also have experience with the way LD are represented in at least one format (e.g., Turtle or RDF/XML), as the mapping specification must spell out how LD will be created and described from the respective provider data.

- Third, after the XSLT specification is produced, the data provider should call the addXSLMappings method of the LDMS service, passing as input the zipped original data, the XSLT specification and the URI of the LD graph to be generated. The method will then unzip and transform all XML files into one LD specification (according to the output LD format used in the XSLT specification), which will be imported into the underlying Triple Store under the graph whose URI is designated in the method call.

- Fourth, when updates are made to the original data, depending on the type of modification, one of the following sub-steps can be performed:

- New data are generated: In this case, the new data should be collected and zipped into a single file, and the addXSLMappings method should be called again with the remaining query parameters (XSLT content and graphURI) set to the same values as in the third step's method call.

- The old data are modified or deleted: In this case, it is better to create the respective SPARUL statements and use them as input to the ldupdate method of the LDMS service. An alternative would be to drop the graph identified by graphURI (i.e., the LD generated) and then re-import everything. However, this alternative is suitable only when the data size is small and updates are infrequent. Otherwise, it would lead to performance issues as well as unavailability of the provider's LD.
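The packaging of new XML files into a single zip file, as required by the first sub-step, can be sketched in Python as follows. This is a minimal illustration under our own assumptions: the function name and the file names are hypothetical, and the actual call to addXSLMappings (with the XSLT content and graphURI) is not shown, since its request format is defined in the LDMS method documentation.

```python
import io
import zipfile
from typing import Mapping


def zip_xml_files(files: Mapping[str, bytes]) -> bytes:
    """Pack the given XML documents (file name -> content) into one zip archive.

    Only files with an .xml extension are accepted, so that unrelated
    files are not sent for transformation by mistake.
    """
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for name, content in sorted(files.items()):
            if not name.lower().endswith(".xml"):
                raise ValueError(f"not an XML file: {name!r}")
            archive.writestr(name, content)
    # The archive is complete only after the with-block has closed it.
    return buffer.getvalue()


# Hypothetical new records created since the last import.
new_data = {
    "record_0101.xml": b"<record id='101'/>",
    "record_0102.xml": b"<record id='102'/>",
}
payload = zip_xml_files(new_data)
print(len(payload), "bytes ready to be passed to addXSLMappings")
```

Zipping only the new files, rather than the complete data set, keeps repeated imports small and avoids re-transforming data that are already in the Triple Store.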

Let us now provide a specific scenario in which the above process is applied to the case of the EPPO data provider, which wishes to import its XML-based data related to the earthquake management theme into the InGeoCloudS platform. First, the provider should create a (visual) mapping of its data model to GSOM. A partial mapping for this case is shown in Figure 3. As can be seen, notions such as earthquakes and events are mapped to the respective notions of the GSOM model.

Second, the provider should create an XSLT that realizes the data-to-GSOM-model mapping according to a specific LD format (N3). Figure 4 depicts a part of the XSLT mapping specification that could be generated based on the respective visual mapping of Figure 3. As can be seen, earthquake and event data have been mapped into respective LD descriptions with GSOM-based content according to the N3 format.

Figure 4. Fragment of XSLT Mapping Specification between EPPO data model and GSOM

Third, the EPPO provider should invoke the addXSLMappings method with the XSLT content and a zipped file containing all provider data. This method will first transform the provider data into an LD specification in N3 and then store it in the underlying Triple Store. Figure 5 shows a small fragment of the LD specification that corresponds to the XSLT content presented in Figure 4.
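The effect of the transformation step can be illustrated with a small Python stand-in. Please note that this is not XSLT and not the EPPO mapping: the XML element names, the URIs and the `ex:` terms are all hypothetical, since the actual EPPO schema and the GSOM terms used in Figures 4 and 5 are not reproduced here. The sketch only shows the kind of XML-to-N3 rewriting that the XSLT specification is expected to express.

```python
import xml.etree.ElementTree as ET

# Hypothetical input; the real EPPO XML structure may differ.
SAMPLE_XML = """\
<earthquakes>
  <earthquake id="eq1">
    <date>1999-09-07</date>
    <magnitude>5.9</magnitude>
  </earthquake>
</earthquakes>
"""


def earthquakes_to_n3(xml_text: str) -> str:
    """Rewrite each <earthquake> element as an N3 description."""
    root = ET.fromstring(xml_text)
    lines = ["@prefix ex: <http://example.org/gsom-placeholder#> ."]
    for quake in root.iter("earthquake"):
        subject = f"<http://example.org/earthquake/{quake.get('id')}>"
        date = quake.findtext("date")
        magnitude = quake.findtext("magnitude")
        lines.append(f"{subject} a ex:Earthquake ;")
        lines.append(f'    ex:date "{date}" ;')
        lines.append(f'    ex:magnitude "{magnitude}" .')
    return "\n".join(lines) + "\n"


print(earthquakes_to_n3(SAMPLE_XML))
```

In the actual service, this rewriting is driven entirely by the provider's XSLT, so the output format and vocabulary are whatever the XSLT specification prescribes.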

Figure 5. Fragment of LD Specification produced after EPPO calls addXSLMappings method with the XSLT generated

Finally, when new earthquake data are being created, the EPPO provider will re-invoke the addXSLMappings method with the XSLT content and a zipped file containing only the new XML data.
